AI support bot for the Max messenger: from cloud solutions to custom integration

Contents

- Creating using services

- Creation with the involvement of integrator studios

- Custom development order from a software development and AI implementation studio

- Without using RAG

- Using RAG

- LLM Response Quality

- What to pay attention to

An AI support bot for a corporate or customer-facing messenger is no longer an experiment; it is becoming a working business tool. Companies want to reduce response time, take load off first-line support, unify communication, and make service available 24/7. For the Max messenger, the task usually comes down not only to building a conversational interface, but also to integrating it with internal data, service scenarios, CRM, the knowledge base, and the rules for escalating to a live operator.

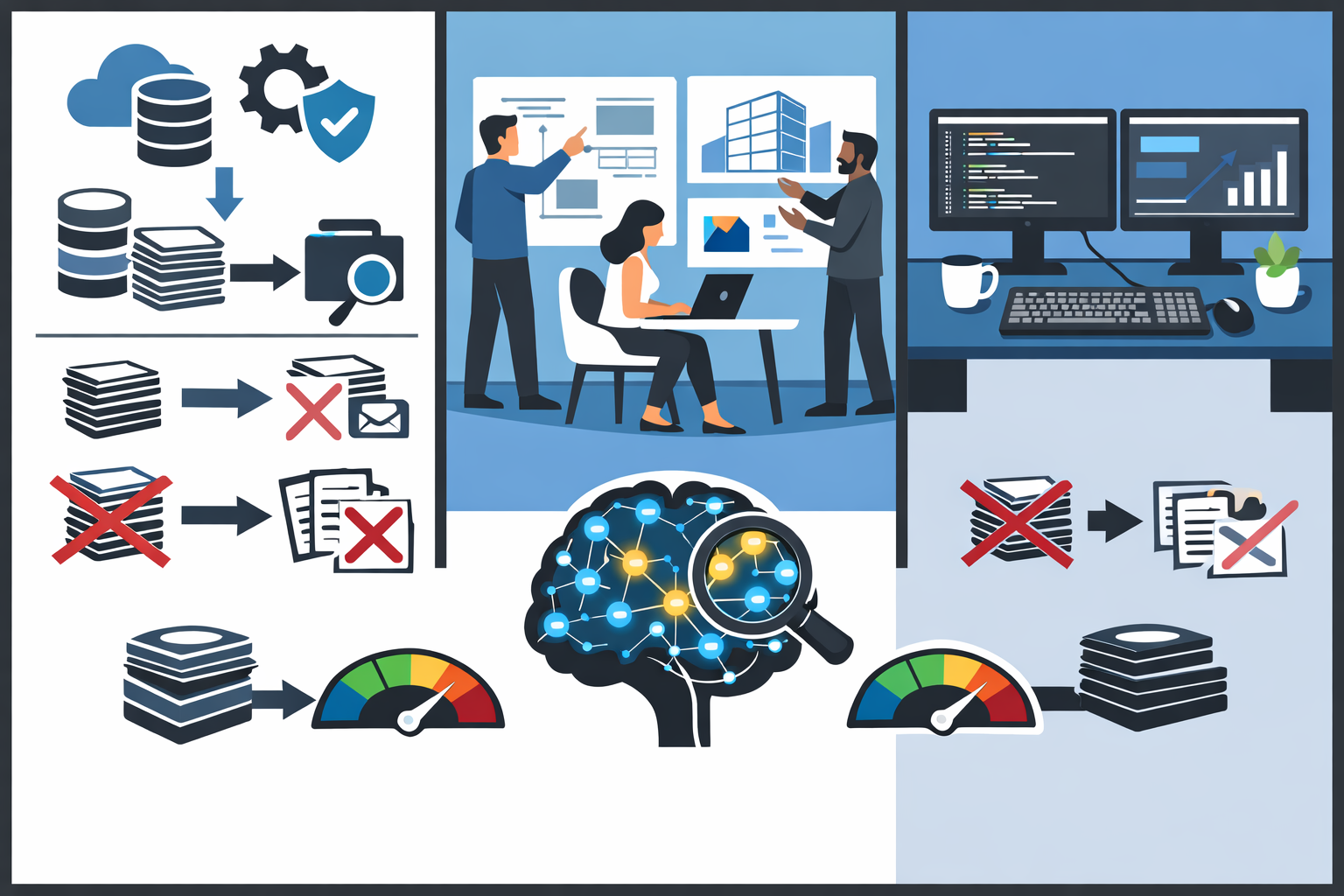

In practice, there are several ways to implement such a solution: from quick launch on ready-made platforms to full-fledged custom development with the integration of large language models and corporate knowledge sources. The choice depends on the budget, deadlines, security requirements, the expected quality of responses, and the level of control over the system. Below we will look at the main approaches and help you understand which one is suitable for your project.

Briefly

An AI support bot for the Max messenger can be implemented in several ways: through ready-made services, with the help of an integrator studio, or as custom development. The first option usually gives the fastest launch, the second is a compromise between speed and adaptation, and the third provides maximum flexibility and control. The choice depends on the budget, security requirements, the depth of integrations, and how critical the quality of responses is for the business.

If the task is limited to standard questions and initial routing of requests, a simple architecture is enough, and the complex knowledge search layer can be postponed. If the bot has to respond based on a large and constantly updated body of documents, regulations, and instructions, it is reasonable to consider an architecture with RAG. In either case, the final result is determined not only by the model, but also by the quality of the data, the escalation scenarios, the response control system, and the maturity of the implementation.

In practice, a good support bot is not just a "chat with a neural network" but a managed service tool. It must understand the limits of its competence, work correctly with internal knowledge sources, hand complex requests over to a person, and keep improving based on real dialogues. That is why the target cases, quality requirements, and level of acceptable risk need to be defined before the project starts.

Creating using services

The fastest way to launch an AI support bot is to use ready-made services and bot builders. These are platforms that let you assemble a bot without deep development: configure scenarios, connect a language model, add an FAQ, integrate webhooks, and publish the solution in the desired communication channel. This approach is especially popular with companies that need to test a hypothesis and quickly launch an MVP without a long design cycle.

The advantage of the service approach is speed. Sometimes the first working bot appears in a few days or weeks. The business gets the opportunity to test real-world scenarios: answers to standard questions, routing of requests, automatic classification of requests, collection of contact data and transfer of complex cases to the operator. For small teams, this is often the most rational way to start, because it does not require assembling your own development team for the project.

However, this approach has limitations. The more the project moves towards non-standard logic, complex integrations, or special data protection requirements, the more noticeable the platform's constraints become: limits on scenarios, difficulties with integrating internal systems, dependence on pricing plans, and limited control over how the AI part works. In addition, as the workload and requirements grow, the subscription price may rise faster than expected at the beginning.

This option is particularly appropriate when:

- you need to quickly test an idea or launch a pilot;

- support is based on standard questions and template scenarios;

- there are no strict requirements for deep customization;

- it is important to minimize initial investments.

If a company is just starting to automate support, the service format can be a good sandbox. But it should be considered as a stage, and not always as the final architecture.

Creation with the involvement of integrator studios

The second option is to contact an integrator studio that knows how to adapt off-the-shelf and platform solutions to business tasks. In this case, the company gets not just access to a service, but a contractor who takes over configuring the logic, building integrations, adapting the bot to business processes, and launching it in production. For many organizations, this is a convenient compromise between "do it yourself in a bot builder" and "develop everything from scratch."

The strong point of integrators is that they already know the typical implementation mistakes. They help you think through scenarios faster, design handoff routes, and set up data exchange with CRM, help desk, ERP, or internal catalogs. Often, an integrator can offer several levels of solution maturity: from a simple FAQ bot to support with generative AI, request analytics, and omnichannel logic.

But it's important to understand that an integrator studio almost always builds a solution around an existing stack. This means that some of the architectural decisions will be dictated by the platform's capabilities, partner products, and the team's experience. If a project needs unique logic or non-standard requirements for the model, the knowledge repository, and the quality control mechanisms, the integrator may be constrained by the solutions it works with.

In practice, this is a good choice for companies that need turnkey implementation, but without the cost of full-fledged custom development. This is often the path chosen by medium-sized businesses, where the cost of error is already high, and their own digital team does not want to be distracted by the infrastructural details of bot support.

An integrator is usually about speeding up implementation and reducing project risks, but not always about maximizing the flexibility of the architecture.

Custom development order from a software development and AI implementation studio

When a company needs not just a bot, but a strategic digital product, custom development comes to the fore. In this case, the project is tailored to specific processes, security rules, internal systems, data sources, and quality metrics. This approach requires more time and budget, but it gives you a high level of control over the functionality, the roadmap, and the AI part of the solution.

A studio that can both develop software and implement AI can design not only the communication interface in the Max messenger, but also all the internal mechanics: query orchestration, routing by request type, LLM connection, a response verification module, logging, analytics, an admin panel, user roles, and integration with internal services. This is no longer just a bot as an interface, but a full-fledged digital support system.

Custom development is especially justified when:

- there are complex product or service scenarios;

- deep integration with the company's internal systems is needed;

- privacy, logging, and access control requirements are important;

- the quality of responses has to be managed at the architectural level, not just at the prompt level;

- the project is considered a long-term asset, not a temporary experiment.

The disadvantages are a longer launch cycle, a mandatory analysis phase before development, the dependence of the result on the maturity of the contractor, and a higher investment threshold. But it is this path that most often leads to better results in complex B2B and enterprise scenarios. If support affects revenue, customer retention, or reputation, saving on architecture may end up costing more than the initial investment in a high-quality implementation.

Without using RAG

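RAG stands for Retrieval-Augmented Generation: the model's answer is generated from documents retrieved for the specific question.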

A non-RAG approach is a legitimate option, especially if the support area is limited and well-formalized. For example, when a bot answers 20-50 standard questions, collects initial information for a ticket, helps the user choose a topic for the request, or sends the user along the right route. In such scenarios, it is not always necessary to build a full-fledged knowledge base search system.

There is another advantage: an architecture without RAG is simpler and cheaper to start with. It has fewer components, a lower cost of operation, and easier maintenance and debugging. But this simplicity has a limit. As soon as the amount of knowledge grows, documents are updated frequently, and the accuracy of the answer becomes critical, a model without RAG starts to make things up and make mistakes more often. This is especially dangerous in support, where incorrect advice can lead to a customer complaint, loss of trust, or a violation of regulations.

In other words, a bot without RAG is suitable for simple and controlled scenarios, but it rarely becomes a sufficient solution for mature support, where the relevance of information and reproducibility of responses are important.
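The limited, well-formalized scenario described above can be sketched as a small keyword-matched FAQ with a fallback to an operator. This is only an illustration in Python: the FAQ entries, the `answer` function, and the `min_hits` threshold are hypothetical, and a real bot would typically add an LLM or an intent classifier on top.

```python
import re

# Hypothetical FAQ entries: each maps trigger keywords to a fixed answer.
FAQ = [
    ({"password", "reset", "login"},
     "To reset your password, use the 'Forgot password' link on the sign-in page."),
    ({"delivery", "shipping", "courier"},
     "Delivery terms are listed on the order page."),
    ({"invoice", "payment", "refund"},
     "Billing questions are handled in the 'Payments' section of your account."),
]

ESCALATE = "I am not sure about this one, so I am passing your request to an operator."


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer(message: str, min_hits: int = 1) -> str:
    """Return the FAQ answer with the most keyword hits, or escalate."""
    words = tokenize(message)
    best_hits, best_reply = 0, ESCALATE
    for keywords, reply in FAQ:
        hits = len(words & keywords)
        if hits > best_hits:
            best_hits, best_reply = hits, reply
    # Below the threshold the bot does not guess; it hands over to a human.
    return best_reply if best_hits >= min_hits else ESCALATE
```

The explicit fallback matters more than the matching logic: a question outside the list goes to an operator instead of receiving a guessed answer.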

Using RAG

If a project involves working with a changing knowledge base, internal instructions, regulations, product cards, a history of typical incidents, and a large amount of corporate information, an architecture with RAG usually becomes preferable. The essence of the approach is that before responding, the system extracts relevant data from the prepared knowledge repository and only then transmits it to the model to generate a correct and reasonable response.

For businesses, this means more predictable support. Instead of relying on the "memory" of the model, the company manages the sources from which the answer is built. This is especially important in scenarios where updates occur regularly: terms of service, tariffs, instructions, SLAs, internal regulations, and legal formulations change. When implemented correctly, RAG allows you to reduce the number of hallucinations and increase the proportion of responses that really rely on up-to-date data.
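The "retrieve first, then generate" flow can be sketched in a few lines of Python. This is a simplified illustration, not a production design: the `KNOWLEDGE` snippets are made up, the search uses a plain bag-of-words cosine similarity instead of an embedding index, and `llm` is a placeholder for whatever model client the project actually uses.

```python
import math
import re
from collections import Counter
from typing import Callable

# Hypothetical knowledge base snippets; real ones come from prepared documents.
KNOWLEDGE = [
    "Refunds are processed within 10 days after the return is approved.",
    "The premium tariff includes priority support and extended storage.",
    "To change the delivery address, open the order card and press Edit.",
]


def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(question: str, top_k: int = 2) -> list[str]:
    """Return the top_k snippets most similar to the question."""
    q = vectorize(question)
    ranked = sorted(KNOWLEDGE, key=lambda doc: cosine(q, vectorize(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context is not enough, say so and offer to call an operator.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


def answer(question: str, llm: Callable[[str], str]) -> str:
    """Retrieve context first, then pass the assembled prompt to the model."""
    return llm(build_prompt(question, retrieve(question)))
```

Because `llm` is passed in as a function, the retrieval step can be tested on its own, for example with `answer(question, lambda prompt: prompt)` to inspect the prompt the model would receive.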

But implementing RAG is not a magic quality button. You need to prepare the documents correctly and think through data chunking, metadata, the search mechanism, context selection rules, and generation restrictions. Sometimes a bad RAG turns out to be worse than none at all: the bot gets irrelevant chunks, gets confused about document versions, or responds based on weak context. Therefore, success depends not only on the idea itself, but also on the quality of the engineering implementation.
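As an illustration of chunking with metadata, here is a minimal Python sketch that splits a document into overlapping word windows and attaches the source name and version to every chunk. The `Chunk` fields, the function name, and the default sizes are assumptions for the example; real projects often split by headings or sentences and tune sizes empirically.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str    # document name (hypothetical field)
    version: str   # document version, so outdated and current text are not mixed
    position: int  # index of the chunk within the document


def chunk_document(text: str, source: str, version: str,
                   size: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split text into windows of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for position, start in enumerate(range(0, len(words), step)):
        piece = words[start:start + size]
        chunks.append(Chunk(" ".join(piece), source, version, position))
        if start + size >= len(words):
            break  # the last window already reached the end of the document
    return chunks
```

Keeping `version` on each chunk lets the search layer filter out superseded documents, which addresses the confusion between document versions mentioned above.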

What RAG provides in practice:

- access to an up-to-date and manageable knowledge base;

- higher level of explainability of answers;

- reducing the risk of fictional facts;

- the ability to scale support without constantly rewriting prompts by hand.

This is especially useful for the Max messenger if the bot is supposed to be not just a chat window, but a real digital first-line support employee.

LLM Response Quality

The quality of responses from a large language model is one of the key factors for the success of the project. Even the most beautiful integration and user-friendly interface will not help if the bot answers vaguely, evades the question, confuses the facts or formulates the answers in such a way that the user loses trust. Therefore, when choosing an architecture, it is important to evaluate not only the model itself, but also the entire environment in which it operates.

Quality is affected by several layers at once. The first is the LLM itself: its capability level, context window, how reliably it follows instructions, its ability to work in Russian, its response style, and its predictability. The second is prompt engineering, that is, how the system is given its role, rules, and response format. The third is the context: what data gets into the model, how relevant it is, and whether the prompt is overloaded with unnecessary information. The fourth is post-processing: fact checking, wording restrictions, escalation rules, and moderation of risky responses.

In real projects, quality is usually measured not abstractly, but through specific metrics. For example, the percentage of correctly resolved requests without operator involvement, the average length of the dialogue before the decision, the percentage of escalations, CSAT, the number of false or dangerous responses, and the time to the first useful response. Sometimes, after launch, it is discovered that the bot is "polite but useless": it formally responds competently, but does not help the user come to a result. This is one of the most common hidden risks of generative interfaces.
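Several of the metrics listed above can be computed directly from dialogue logs. The sketch below assumes a hypothetical `Dialogue` record with four fields; the field names and the log format are illustrative and will differ from system to system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Dialogue:
    resolved_by_bot: bool  # closed without operator involvement
    escalated: bool        # handed over to a human
    messages: int          # number of messages before the outcome
    csat: Optional[int]    # 1-5 score, None if the user did not rate


def support_metrics(dialogues: list[Dialogue]) -> dict[str, float]:
    """Aggregate basic quality metrics over a batch of dialogues."""
    total = len(dialogues)
    if total == 0:
        return {}
    rated = [d.csat for d in dialogues if d.csat is not None]
    return {
        "self_service_rate": sum(d.resolved_by_bot for d in dialogues) / total,
        "escalation_rate": sum(d.escalated for d in dialogues) / total,
        "avg_messages": sum(d.messages for d in dialogues) / total,
        # CSAT is averaged only over dialogues that were actually rated.
        "avg_csat": sum(rated) / len(rated) if rated else float("nan"),
    }
```

Tracking these values per week or per release makes the "polite but useless" pattern visible: dialogues grow longer and CSAT drops while the escalation rate stays flat.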

It is good practice to test quality on your own set of scenarios before a large-scale launch. You need a list of typical and atypical requests, negative cases, conflict situations, questions the bot lacks data for, and requests where the bot is required to recognize its limits and hand the dialogue over to a person. It is this kind of verification that shows the real maturity of the solution, rather than the demo effect.

What to pay attention to

When choosing an approach to creating an AI support bot for the Max messenger, it is important to look beyond a polished demo or the promise of "we will connect it in a week." In a real implementation, the details decide everything: from where the knowledge lives to how the bot hands the dialogue over to an operator and who is responsible for keeping the content up to date. The sooner these issues are worked out, the lower the risk that the project turns out to be an expensive toy instead of a working tool.

First, determine the purpose of the implementation. If the task is to take load off the first line of support, the emphasis will be on typical scenarios, classification of requests, and response speed. If the goal is to improve the quality of the customer experience, you will have to work more deeply with context, response quality, and omnichannel processes. If the bot is supposed to help employees inside the company, then access rights, integrations, and protection of internal data become critical.

Second, think through the boundaries of the bot's responsibility in advance. It shouldn't respond confidently where it doesn't have enough data. It is better to limit the response and direct the user further than to create a false sense of competence. This is especially important for legal, financial, technical, and sensitive customer scenarios.

Third, be sure to treat the project as a process, not as a one-time development. The knowledge base becomes outdated, business rules change, models are updated, and new types of requests emerge. This means that you need a product owner, regular dialogue analysis, an improvement cycle, and a clear maintenance scheme. An AI support bot is not a box that is switched on once, but a live system that requires management.

Before launching, it is useful to check the following questions:

- Which scenarios should the bot close on its own, and which should be passed on to a human?

- Where does the knowledge come from, and who is responsible for keeping it up to date?

- How are the quality of responses and the business effect of the implementation measured?

- What are the requirements for data security, storage, and transmission?

- How easily can the solution scale as load and functionality grow?

Typical cases of using a support bot

The practical value of an AI bot becomes especially noticeable not in abstract demonstrations, but in repetitive work scenarios. A bot has a measurable effect precisely where support staff answer the same questions every day, clarify the status of requests, help with basic product navigation, or collect initial data for further processing. It reduces waiting time, takes some of the load off operators, and makes communication more consistent.

In the Max messenger, such a bot can be used as the first line of contact. The user writes to the usual channel, and the system immediately accepts the request, determines its type, issues an initial consultation, and, if necessary, redirects the request to the appropriate team. For businesses, this means a reduction in the proportion of simple manual responses and a more rational allocation of specialist time.

The most common application cases look like this:

- answers to frequently asked questions about products, services, tariffs, statuses, and service rules;

- receiving and pre-qualifying requests before handing them over to the operator or the relevant department;

- help with onboarding new clients or employees through step-by-step tips;

- informing about the status of an application, order, request, or incident when integrating with internal systems;

- search for the necessary instructions, regulations, or knowledge base article based on the user's natural query;

- automatic routing of the dialog by issue type, priority, and customer category.
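The last item, routing by issue type, priority, and customer category, can be sketched with simple keyword rules. The queue names, keyword sets, the `"vip"` tier, and the `route` function are all hypothetical; in practice the classification step is often delegated to an LLM or a trained classifier.

```python
import re
from dataclasses import dataclass

# Hypothetical queues and the keywords that point to them.
RULES = {
    "billing": {"invoice", "payment", "refund", "tariff"},
    "technical": {"error", "crash", "bug", "login"},
    "delivery": {"order", "courier", "shipping", "tracking"},
}
URGENT_WORDS = {"urgent", "asap", "immediately"}


@dataclass
class Ticket:
    queue: str
    priority: str


def route(message: str, customer_tier: str = "standard") -> Ticket:
    """Pick a queue by keyword hits and a priority by tier or urgency words."""
    words = set(re.findall(r"[a-z0-9]+", message.lower()))
    hits = {queue: len(words & keywords) for queue, keywords in RULES.items()}
    best_queue = max(hits, key=hits.get)
    # With no keyword hits at all, the request goes to a general queue.
    queue = best_queue if hits[best_queue] > 0 else "general"
    priority = "high" if customer_tier == "vip" or words & URGENT_WORDS else "normal"
    return Ticket(queue, priority)
```

For example, `route("Where is my order?", "vip")` lands in the hypothetical `delivery` queue with `high` priority, while a message with no matching keywords goes to `general`.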

Internal use cases deserve a separate mention. A support bot can be useful not only for customers, but also for company employees. For example, it can answer questions about internal regulations, IT support, HR procedures, access rights, corporate services, and typical operational actions. In large organizations, this reduces the volume of repetitive requests to service departments and speeds up access to the necessary information.

If the project is built using RAG and integrations, the cases become even richer. A bot can not just respond in a template, but collect relevant context from the knowledge base, interaction history, and internal systems. Then it turns from an FAQ tool into an intelligent support interface that helps you solve queries faster, more accurately, and closer to the business context of the company.

As a result, the choice between a service, an integrator, and custom development depends not on the fashion for AI, but on the maturity of the task. A quick start on a platform is suitable for simple scenarios. More serious projects are better implemented with an integrator. Critical support systems, where the quality of responses and knowledge management directly affect the business, most often require a custom architecture with carefully designed LLM handling and, if necessary, a RAG component.